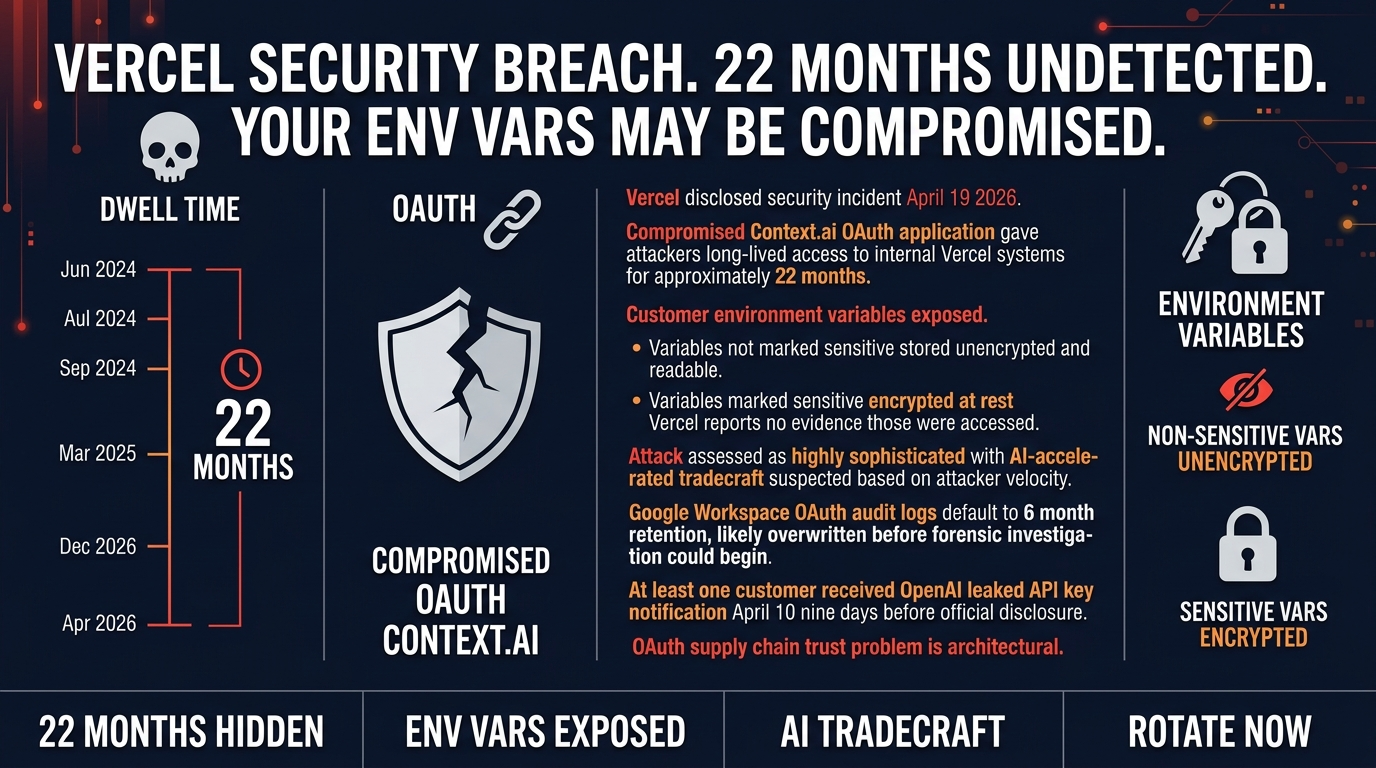

22 months. That’s how long an attacker had access to Vercel’s internal systems before anyone noticed. If you’re running anything on Vercel, that number should concern you more than whatever model dropped this week.

Vercel disclosed a security incident on April 19th. A compromised third-party OAuth application (Context.ai, an AI analytics tool) gave attackers long-lived access to internal systems. Customer environment variables were exposed for a limited subset of users. Vercel’s CEO Guillermo Rauch publicly attributed the attacker’s “unusual velocity” to AI-accelerated tradecraft. This is one of the first high-profile examples of that claim in the wild.

Here’s the part that should make you stop scrolling.

One customer received an OpenAI API key leak notification on April 10th, nine days before Vercel publicly disclosed the breach. Credentials were circulating before anyone knew to look. That’s the actual risk here, not the breach itself but the gap between compromise and notification.

What Actually Happened

The attack started with Context.ai, a legitimate OAuth application. A Vercel employee’s account was compromised through it, and attackers pivoted from there to the employee’s Google Workspace account, then to internal Vercel systems and customer environment variables.

Variables marked “sensitive” were encrypted at rest, and Vercel says there is no evidence those were accessed. Variables not marked sensitive? Stored unencrypted, readable with internal access. That’s the control that mattered. If your variables weren’t marked sensitive, treat them as exposed.
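One way to triage this is to split a project’s variables by how they were stored. The sketch below assumes you have already exported your variables into a list of records with `key` and `type` fields, where `type` is `"sensitive"` for variables marked sensitive. Both the field names and the type values are assumptions for illustration, so adjust them to whatever your export actually contains.

```python
from typing import Iterable

# Assumed value for variables marked sensitive; adjust to match your export.
SENSITIVE_TYPE = "sensitive"


def split_by_exposure(env_vars: Iterable[dict]) -> tuple[list[str], list[str]]:
    """Return (treat_as_exposed, marked_sensitive) lists of variable names."""
    exposed, protected = [], []
    for var in env_vars:
        # Anything not explicitly marked sensitive is treated as exposed,
        # including records with a missing or unknown type.
        if var.get("type") == SENSITIVE_TYPE:
            protected.append(var["key"])
        else:
            exposed.append(var["key"])
    return sorted(exposed), sorted(protected)


# Hypothetical records, not real data.
sample = [
    {"key": "OPENAI_API_KEY", "type": "encrypted"},
    {"key": "STRIPE_SECRET", "type": "sensitive"},
    {"key": "NEXT_PUBLIC_SITE_URL", "type": "plain"},
]
exposed, protected = split_by_exposure(sample)
print("Rotate first:", exposed)
print("Marked sensitive:", protected)
```

The function deliberately fails closed: a variable only lands in the second list if it is positively identified as sensitive.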

But here’s the thing. The 22-month dwell time is the part nobody is talking about enough. Google Workspace OAuth audit logs default to 6-month retention. If that default applied, then by the time forensic investigators started digging, the logs covering everything before the most recent 6 months, roughly the first 16 months of the intrusion, were already gone. That’s not a coincidence. That’s an attacker who knew the log retention policy and planned accordingly.
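The arithmetic behind that gap is worth spelling out. This is a minimal sketch, assuming logs older than the retention window are simply discarded and that the default retention applied for the whole intrusion. `unrecoverable_months` is a hypothetical helper, and the inputs are the 22-month and 6-month figures quoted above.

```python
def unrecoverable_months(dwell_months: int, retention_months: int) -> int:
    """Months of attacker activity that pre-date the oldest retained log."""
    # Only the most recent `retention_months` are still on record.
    return max(0, dwell_months - retention_months)


gap = unrecoverable_months(dwell_months=22, retention_months=6)
print(f"{gap} of 22 months have no surviving audit logs")
```

On those assumptions only the most recent 6 months are still on record, so the blind spot is the earlier part of the intrusion, including how it began.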

Why Rauch’s AI-Accelerated Tradecraft Claim Matters

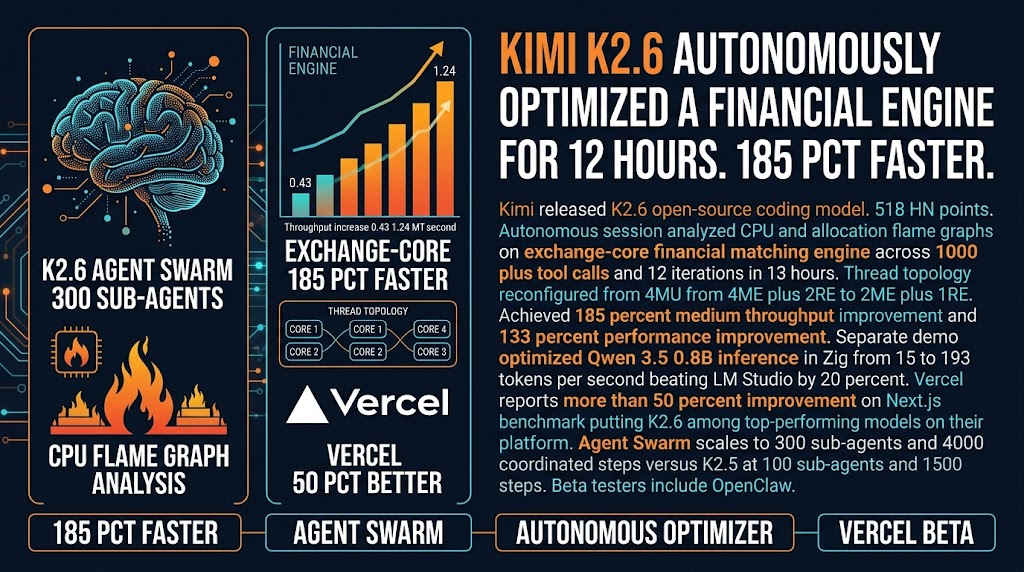

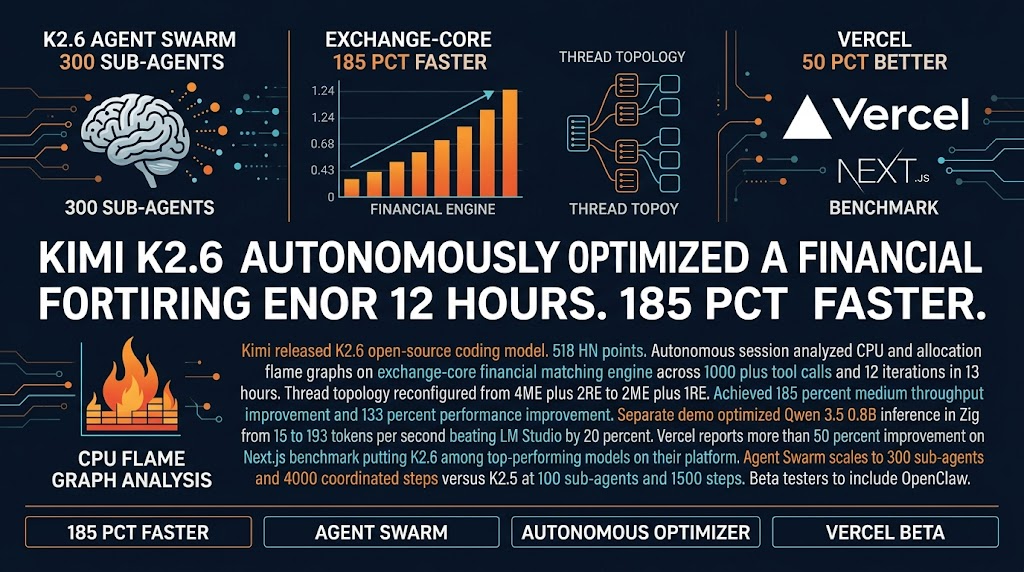

Rauch said the attacker moved with “unusual velocity” and attributed that to AI. He’s probably right, and that’s a wake-up call for anyone doing security planning in 2026.

The tradecraft progression typically goes reconnaissance, initial access, lateral movement, privilege escalation, data access. AI compresses every step. Faster reconnaissance, faster vulnerability identification, faster lateral movement decisions. An attacker who used to spend weeks mapping a target’s infrastructure can now do it in hours.

Here’s the thing nobody wants to say out loud. If you’re still doing security planning based on 2024 threat models, you’re already behind. Assume your adversaries have AI tools. Assume they move faster than you expect. The dwell time in this breach (22 months) suggests the bottleneck wasn’t speed, it was patience. AI handles the tedious parts, humans make the high-level decisions.

For small agencies and solo operators, the implication is uncomfortable. Your detection capabilities need to match the threat, and “matching the threat” now means AI-augmented defense, not just reactive incident response.

What You Should Do Right Now

First, if you’re on Vercel and have environment variables set before April 2026, rotate them today. Not this week, not “when you get to it.” Today. Treat any variable not marked sensitive as potentially exposed. This includes API keys, tokens, and any other credential stored in your Vercel environment. Because exposed credentials were circulating before any notification went out, assume the worst until proven otherwise.
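To turn that into a worklist, you can filter your inventory by creation date. A rough sketch, assuming each record carries a `key` and an ISO 8601 `created_at` timestamp (both field names are hypothetical) and taking April 1, 2026 as the cutoff; move the cutoff later if you want a wider margin.

```python
from datetime import datetime, timezone

# Cutoff taken from the advice above: anything set before April 2026.
CUTOFF = datetime(2026, 4, 1, tzinfo=timezone.utc)


def rotation_queue(env_vars: list[dict]) -> list[str]:
    """Names of variables created before the cutoff, oldest first."""
    dated = [
        (datetime.fromisoformat(v["created_at"]), v["key"])
        for v in env_vars
    ]
    return [key for created, key in sorted(dated) if created < CUTOFF]


# Hypothetical inventory, not real data.
inventory = [
    {"key": "DATABASE_URL", "created_at": "2024-11-02T09:30:00+00:00"},
    {"key": "RESEND_API_KEY", "created_at": "2026-04-21T14:00:00+00:00"},
    {"key": "GITHUB_TOKEN", "created_at": "2025-06-15T08:00:00+00:00"},
]
queue = rotation_queue(inventory)
print(queue)
```

Sorting oldest first is a design choice, not a rule: the longest-lived credentials had the most time inside the exposure window, so they go to the front of the queue.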

Second, if you received an OpenAI leaked API key notification on or around April 10th, don’t write the timing off as coincidence. Assume it was related to this breach and act accordingly. Rotate that key, check your usage logs for unauthorized calls, and monitor connected systems for unusual activity.
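Checking usage logs can be as simple as filtering for calls you can’t account for. The sketch below assumes you have a usage export with `timestamp` and `source` fields. Those fields are hypothetical, real exports differ by provider, and the exposure window is something you have to pick yourself.

```python
from datetime import datetime


def suspicious_calls(usage, known_sources, window_start, window_end):
    """Usage records inside the exposure window from unrecognized sources."""
    return [
        rec for rec in usage
        if window_start <= datetime.fromisoformat(rec["timestamp"]) <= window_end
        and rec["source"] not in known_sources
    ]


# Hypothetical usage export and exposure window, not real data.
usage = [
    {"timestamp": "2026-03-28T02:14:00", "source": "203.0.113.7"},
    {"timestamp": "2026-03-28T10:00:00", "source": "prod-backend"},
    {"timestamp": "2026-04-12T03:00:00", "source": "198.51.100.9"},
]
flagged = suspicious_calls(
    usage,
    known_sources={"prod-backend", "staging-backend"},
    window_start=datetime(2026, 3, 1),
    window_end=datetime(2026, 4, 10, 23, 59, 59),  # assumed rotation date
)
for rec in flagged:
    print(rec["timestamp"], rec["source"])
```

An allowlist of known sources is used here instead of a blocklist because you can enumerate your own callers but not an attacker’s.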

Third, audit your OAuth apps. Not someday, now. Go to your Google Workspace admin panel, your GitHub organization settings, your Vercel team settings, and review every connected OAuth application. If you don’t recognize it or don’t use it actively, revoke access. The attack started with a legitimate OAuth app that gave persistent access to a corporate account. You need to know what’s connected to your accounts.
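If the list of connected apps is long, a small script can surface the ones to look at first. This is a sketch under stated assumptions: the app records (`name`, `scopes`, `last_used`) are a hypothetical export format, the 90-day idle threshold is arbitrary, and the scope list is a short set of examples of wide-reaching Google and GitHub scopes, not a complete catalogue.

```python
from datetime import date, timedelta

# Example wide-reaching scopes; extend this for the providers you use.
BROAD_SCOPES = {
    "https://www.googleapis.com/auth/drive",
    "https://mail.google.com/",
    "repo",
    "admin:org",
}
MAX_IDLE = timedelta(days=90)  # assumed threshold, tune to taste


def review_apps(apps, today):
    """Map app name -> reasons it needs a revoke-or-justify decision."""
    findings = {}
    for app in apps:
        reasons = []
        if today - app["last_used"] > MAX_IDLE:
            reasons.append("idle")
        if BROAD_SCOPES & set(app["scopes"]):
            reasons.append("broad scope")
        if reasons:
            findings[app["name"]] = reasons
    return findings


# Hypothetical connected apps, not real data.
apps = [
    {"name": "analytics-tool", "last_used": date(2025, 9, 1),
     "scopes": ["https://www.googleapis.com/auth/drive"]},
    {"name": "ci-bot", "last_used": date(2026, 4, 18), "scopes": ["repo"]},
    {"name": "calendar-sync", "last_used": date(2026, 4, 19),
     "scopes": ["https://www.googleapis.com/auth/calendar.readonly"]},
]
findings = review_apps(apps, today=date(2026, 4, 20))
for name, reasons in findings.items():
    print(name, "->", ", ".join(reasons))
```

A flag here is a prompt for a human decision, not a verdict. An actively used CI integration may legitimately need a broad scope, but someone should be able to say why.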

The OAuth Supply Chain Problem Nobody Fixes

Here’s the uncomfortable truth about this breach.

OAuth applications are how the modern developer works. Connect your GitHub, connect your Google Workspace, use some analytics tool, use some CI integration. Each connection is a potential access point that bypasses most traditional security controls.

The attack worked because a legitimate, authorized OAuth app gave persistent access to a corporate account without triggering standard detection. Context.ai wasn’t malicious. It was compromised. That’s a different threat model than “don’t install sketchy software.”

For agencies, this means you need to treat OAuth app authorization like you treat vendor access to your infrastructure. Audit who has access, what they can access, and how long they keep that access. The blast radius from one compromised OAuth app was a major platform breach. That’s not hypothetical, that’s what happened.

The Vercel breach is a data point, not an anomaly. The LiteLLM, Axios npm package, Codecov, and CircleCI incidents all targeted developer-stored credentials across CI/CD and deployment platforms. This is a pattern, not an incident. Assume your credential stores are under constant low-level probing and build your controls accordingly.

If your variables weren’t marked sensitive, the credentials you stored in Vercel may already be in attacker hands. That’s the actual risk. Check your env vars, rotate everything, audit your OAuth apps. Not later, now.

Sources: Vercel Security Bulletin | HN Discussion | Trend Micro Analysis