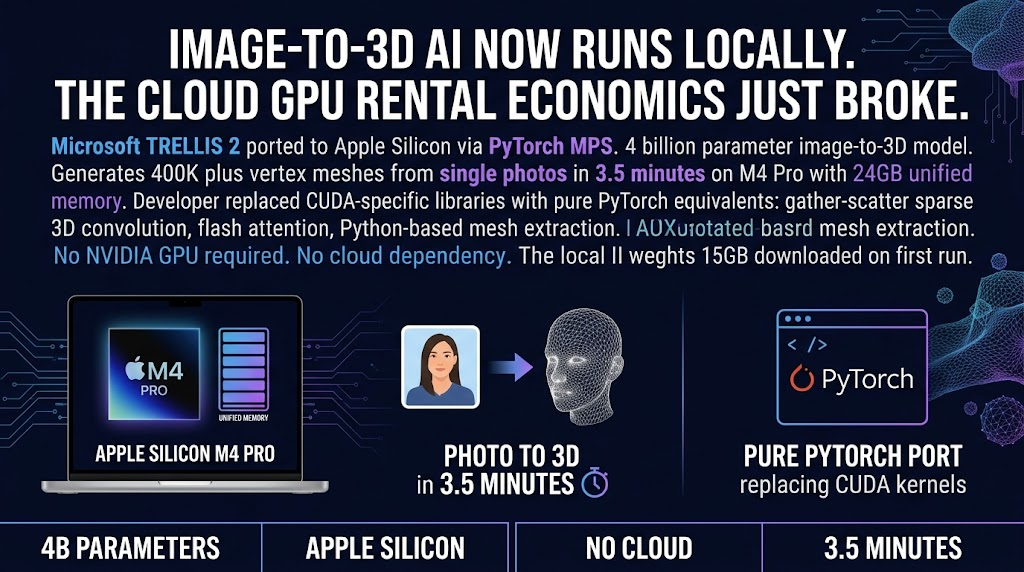

A developer just ported Microsoft’s image-to-3D AI model to Apple Silicon. No cloud required. No NVIDIA GPU needed. Just an M4 Pro and 3.5 minutes.

Microsoft’s TRELLIS.2 is a 4-billion parameter model that converts single photos into 3D meshes. Until last week, you needed CUDA and an NVIDIA GPU to run it. Now you need a Mac with 24GB of unified memory and about 15GB of disk space. The port replaces the CUDA-specific libraries with pure-PyTorch equivalents. Flash attention, sparse convolution kernels, mesh extraction. All reimplemented in a few hundred lines of code.

The output is OBJ and GLB files with PBR materials. Ready for your 3D application of choice.

What This Means for Small Operators

Here’s the angle nobody is talking about yet.

Three years ago, this kind of model required cloud GPU rental. You were paying per-minute for H100 instances, watching your budget disappear while a progress bar crawled across your screen. Then the 70B parameter models started running locally. Then zero-copy GPU inference hit Apple Silicon. Now image-to-3D.

Each step felt incremental. Looking at the pattern from here, it doesn’t feel incremental anymore. It feels like a reset.

If you’re building anything involving 3D content, the economics have fundamentally changed. No per-generation fees. No data leaving your machine. No waiting for a cloud queue. The speed trade-off is real (roughly 10x slower than CUDA for sparse convolution) but for most workflows that need privacy or cost control over speed, this is good enough.

Think about what you could actually ship if the generation step costs you nothing but electricity and time.

The Real Story Is the Porting Work

Here’s the thing that caught my eye.

The developer didn’t use some magical new toolkit. They replaced CUDA-specific operations with standard PyTorch equivalents. Gather-scatter sparse 3D convolution instead of flex_gemm. Python-based mesh extraction instead of nvdiffrast. The result is pure-PyTorch code that Apple Silicon can actually run.

That means this isn’t a one-off miracle. It’s a blueprint. Any CUDA model with similar operations can be ported the same way. The hard parts are identify which kernels are CUDA-only, find or build PyTorch alternatives, and test that the output quality stays acceptable.

The developer did the hard part. Now others can follow the pattern.

For agency operators, this matters because the line between “cloud AI” and “local AI” is getting blurry in real time. The tools you thought required expensive cloud infrastructure are running on hardware you probably already have. The ROI calculation for cloud GPU rental is getting harder to justify with every passing month.

What You Should Actually Do

Before you go download this and try to replace your entire 3D workflow, a few practical notes.

First, you need 24GB of unified memory. Not 16GB. Not 8GB. 24GB minimum, because the model peaks at around 18GB during generation. If you’re on a base model MacBook Pro, this isn’t for you. If you’re on an M4 Pro with 24GB or more, you can run this today.

Second, the output quality has trade-offs. No texture export (vertex colors only) and no hole filling. For some workflows that’s fine. For others it isn’t. Test before you commit.

Third, the commercial license on one of the background models (RMBG-2.0 from BRIA AI) requires a separate commercial license if you’re using it for paid work. Don’t gloss over this if you’re building a service around this.

If you’re doing 3D content work, download the port, run it on a few test images, and see if the output quality works for your clients. If it does, you just eliminated a line item from your production budget. If it doesn’t, you learned something useful either way.

The gap between “works in theory” and “works on my desk” just got a lot smaller.

Sources: GitHub: TRELLIS Mac Port | HN Discussion | Microsoft TRELLIS.2