# Your AI Subscription Is Probably 5x Overpriced

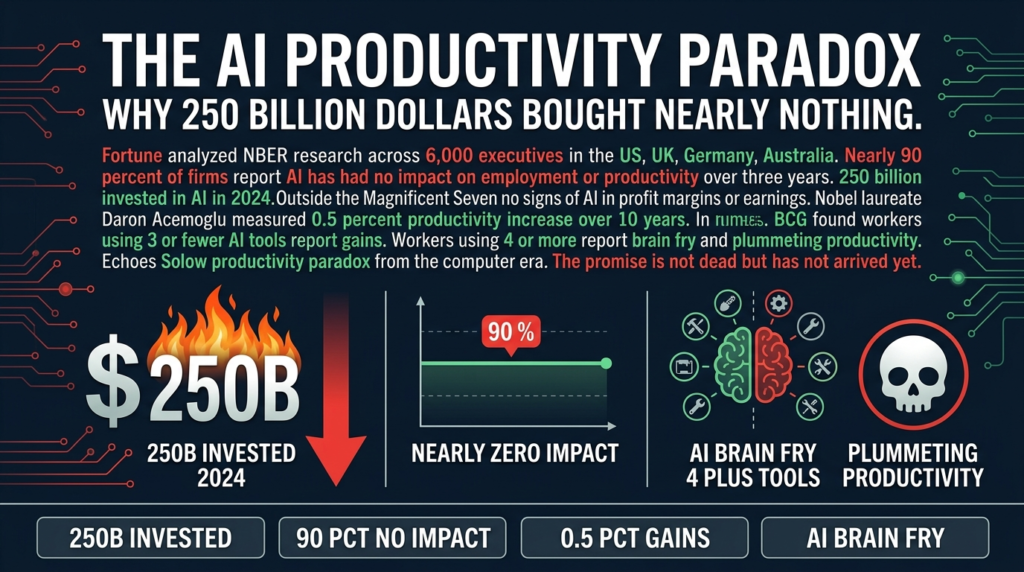

Two Chinese AI labs released frontier-level coding models on the same day. Both claim benchmark leadership. Both are available via API right now. One costs $1.3 million per million tokens. The other costs under a dollar.

That’s the commoditization story in two numbers. And if you’re still paying $100 a month for a single AI subscription while running a small agency or solo operation, you might want to keep reading.

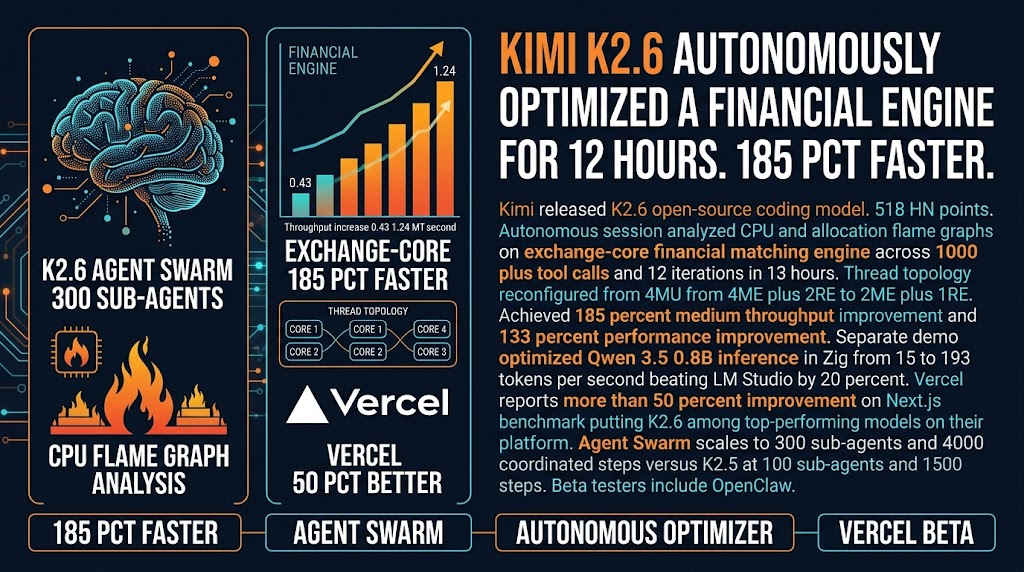

Alibaba dropped Qwen3.6-Max-Preview on April 20th, claiming the highest scores on six major programming benchmarks including SWE-bench Pro and Terminal-Bench 2.0. Within hours, Kimi K2.6 hit HN with similar claims, sparking a 900+ comment thread between the two model fandoms. The programming internet went slightly insane.

Here’s what the benchmark war is hiding.

Most agencies are paying for the wrong things. Premium subscriptions made sense when frontier-level performance required frontier-level prices. That gap is closing fast. Multiple HN commenters report canceling $100+ monthly subscriptions for GLM-5.1 at $20-30 per month and seeing no drop in real-world results. That math doesn’t require a benchmark table to understand.

The Benchmark Trap

The Qwen vs Kimi benchmark comparison that everyone is arguing about? The numbers are Qwen’s own claims. The one third-party comparison I found shows Kimi actually slightly ahead on the two overlapping benchmarks. Both labs released their results within hours of each other on the same day, which is impressive coordination for companies supposedly in a bitter race.

Here’s the thing. Benchmark scores measure a model’s performance on standardized tests. Your work is not a standardized test. The model that wins SWE-bench by a few percentage points might perform differently on your specific codebase, your specific documentation style, your specific client workflows.

For most agency work, the differences between top-tier models are smaller than the differences between how you prompt them, how you structure their inputs, and how you verify their outputs. That’s not a knock on the models. It’s just where the actual leverage is.

The practical implication: stop paying for benchmark bragging rights. Pay for what actually works for your workflows.

What This Actually Means for Small Operators

Here’s the honest picture.

You have real alternatives to premium subscriptions right now. Kimi K2.6 via API costs $0.95 per million input tokens and $4 per million output tokens. Qwen3.6-Max is more expensive at $1.30 and $7.80 respectively, but both are a fraction of what premium subscriptions cost at equivalent performance levels.

The caveat is important. These are API access models, not consumer subscriptions. If you’re using the Claude app or ChatGPT Plus, you’re not directly comparing the same thing. The API gives you more control and typically better rates for high-volume work. The consumer apps give you a UI and more polished experience.

But here’s the thing most people don’t say out loud. For coding-heavy agency work, the API is usually what you want anyway. Higher rate limits, better cost control, easier integration into your workflow.

If you’re doing meaningful volume, you’re probably spending $200-500 per month on AI. Switching to commodity models with equivalent real-world performance could cut that to $40-80. That’s not a rounding error. That’s a budget line item that funds something else or pays down overhead.

The people who should care most: solo operators and small agencies where AI is a significant line item in operations costs. The savings compound when you’re paying these bills yourself instead of having a corporation absorb them.

What You Should Actually Do

Three things, in rough order of priority.

First, run your own evaluation. This is the advice everyone gives and nobody follows, but I’ll say it anyway. Pick two or three tasks that represent 80% of your AI usage. Run the same prompts through your current subscription and one or two commodity models. Compare the outputs blind. Most people are surprised by what they find.

Second, if you’re doing high-volume API work, do the math. Take your monthly spend. Estimate what the same volume would cost on Kimi or Qwen. The difference might fund a contractor for a month, or at minimum, fund better tools elsewhere.

Third, watch the pricing curve. Qwen and Kimi releasing within hours of each other on the same day is a signal. The commoditization is accelerating. The model you build your workflow around today will face serious competition from something cheaper and faster within months. Build abstractions where you can. Don’t hard-code to a single provider.

The benchmark wars are entertaining. But they’re someone else’s problem. Your problem is whether you’re paying too much for results you could get elsewhere. That question is answerable today.

Sources: HN Discussion: Qwen3.6-Max-Preview | Lush Binary: Qwen3.6-Max-Preview vs Kimi K2.6 | Qwen on Hugging Face