4.3 hours. That’s how long Xiaomi’s MiMo-V2.5-Pro took to write a complete compiler from scratch. No team. No sprint planning.

just a model pointed at an empty directory.

One trillion and twenty billion parameters.

Forty-two billion active per request. One million token context window. Open weights on Hugging Face. Score of 78.9 on SWE-Bench Verified. Alibaba dropped Qwen3-Coder-Next the same day. Eighty billion total parameters, three billion active per token, runs on a single consumer GPU.

two Chinese labs out-engineered the entire Western closed-model ecosystem in 24 hours.

And nobody’s talking about what this means for your token budget.

Here’s the Token Math Nobody’s Showing You

you’re paying $15 per million tokens for Claude Opus. Xiaomi’s own numbers say MiMo-V2.5-Pro uses 40 to 60% fewer tokens than Claude Opus for comparable coding performance.

Do the math. Same task. Half the tokens. A fraction of the cost.

The Western model companies built a business on quality and price moving together.

Better model, higher price. That coupling is gone now. Open-weight models from Chinese labs are hitting the same benchmark scores at prices that make $15/M look absurd.

i’ve been saying this to every shop that’ll listen. Most of them haven’t tested the alternatives because it’s easier to keep paying the bill they know than figure out the new stack.

The 3B Model Nobody’s Talking About

MiMo’s trillion parameters make the headlines.

Qwen3-Coder-Next is the one that should keep you up at night.

Eighty billion total parameters.

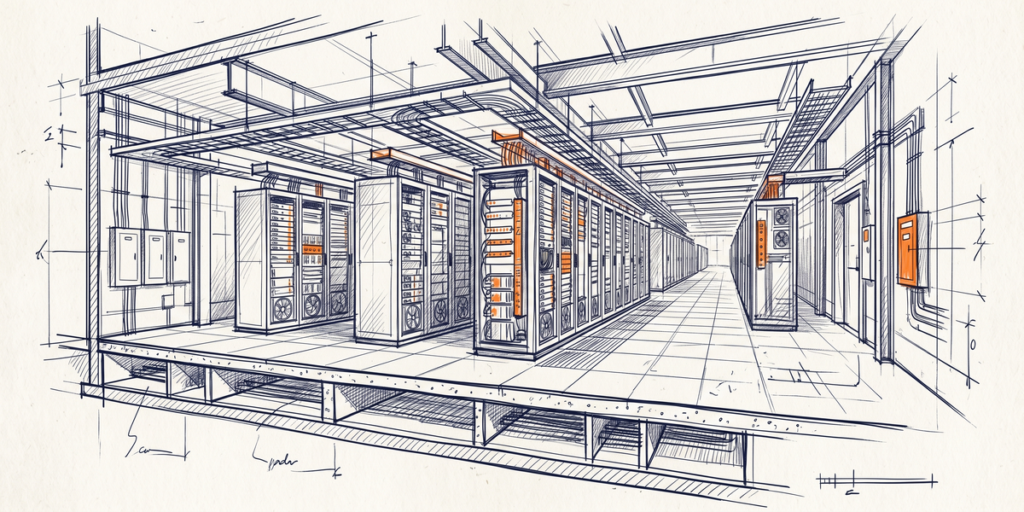

Three billion active per token. Frontier-tier coding performance. Runs locally. No API. No per-token billing. No rate limits.

A small team that couldn’t afford Claude Opus or GPT-5.5 for every task now has a model they can run on their own hardware. For maybe $0.0001 per call once you’ve bought the GPU.

The catch is hardware.

Eight hundred and ninety-three gigabytes on disk. But the 3B active design means it’s actually feasible on decent consumer GPUs now. That’s the part that changed this week.

The Stack Nobody’s Building Yet

Hacker News has developers running MiMo for long autonomous tasks, Qwen3-Coder-Next for cheap local execution. And saving Claude for the problems that actually need frontier reasoning.

Most small shops haven’t built a multi-model routing layer as the tooling felt complex and the price pressure wasn’t high enough.

Both constraints changed this week.

Route the straightforward stuff to the cheap open models. Route the hard problems to Claude or GPT only when you actually need them. Your API bill drops 60 to 80% overnight.

The Move This Week

Download Qwen3-Coder-Next. Spin it up. Run your actual codebase against it, not the benchmark.

If you’re paying for API calls and haven’t tested MiMo or Qwen3 on your real work, you don’t know what your actual cost structure looks like. The benchmark numbers are nice.

Your task performance is what matters.

The shops that figure this out in the next 30 days will have a cost advantage when the next price cycle hits. The ones who keep paying Claude and OpenAI rates since it’s comfortable will be the ones asking why their margins disappeared.

—

Sources

– The Decoder. Xiaomi’s MiMo-V2.5-Pro Takes Aim at Claude Opus

– Hacker News. MiMo and Kimi K2.6 Discussion

– DataLearner — Qwen3-Coder-Next 80B A3B Open Source