April 28th. That’s when this dropped.

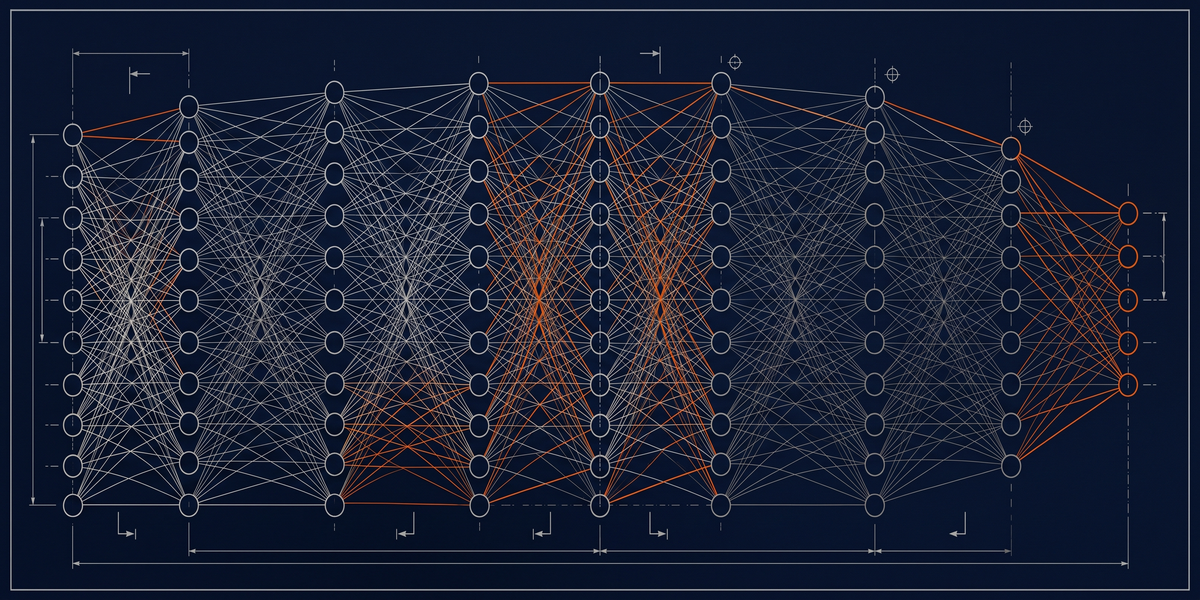

Yeah, xiaomi’s MiMo-V2.5-Pro hit Hugging Face. 1.02 trillion parameters, open weights, scored 78.9 on SWE-Bench Verified.

Alibaba dropped Qwen3-Coder-Next the same day, 80B total, 3B active per token, matching DeepSeek V3.2’s coding benchmark score. In one calendar day, the open-weight ecosystem jumped six months forward.

Nobody in the Western tech press covered it like it was urgent.

They should have.

You’re Not Paying for Quality. You’re Paying for Burn Rate.

I keep seeing this conversation framed wrong.

People talk about which model is best.

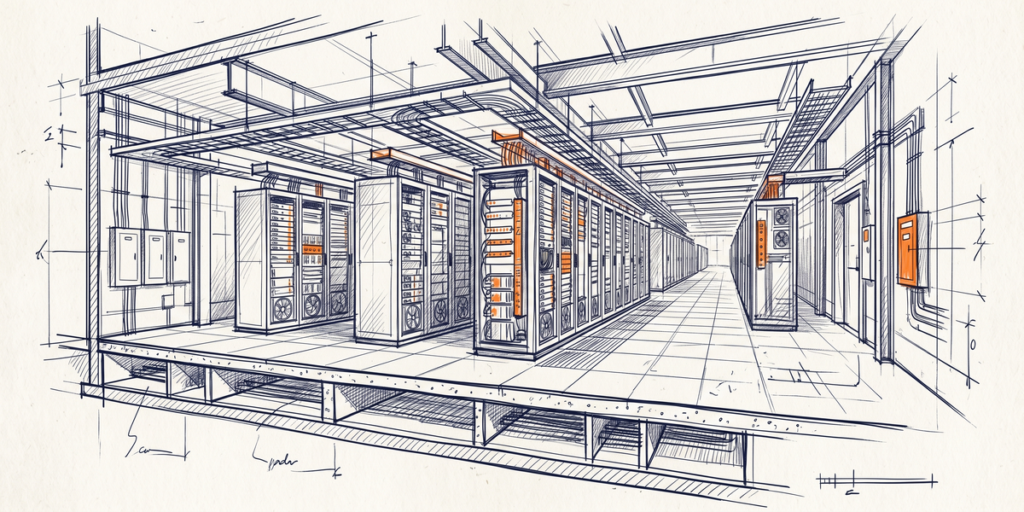

Wrong axis. The axis is cost per useful output. Right now there’s a 100x spread between the cheapest frontier-tier model and the most expensive. DeepSeek V4-Flash is $0.14 per million tokens input. Claude Opus on Bedrock is $15. Same million tokens. That’s not a small business problem. That’s a margin problem for every shop running volume AI workloads.

MiMo-V2.5-Pro’s open weights change the equation differently than people are saying. You’re not paying API pricing at all. You pay for the GPU you already own or the cloud instance by the hour. At 40 to 60% fewer tokens burned for equivalent performance versus Claude Opus, the effective cost per task is drastically lower. Not zero. Lower.

Western closed-model companies built their business on “better model = higher price.” That coupling is breaking in real time.

The Model Nobody’s Talking About Is the More Important One

MiMo’s getting the coverage. Qwen3-Coder-Next is the one that should keep you up.

80 billion total parameters.

Three billion active per request. You can run it on a decent consumer GPU. It scores 70.6 on SWE-Bench Verified. That’s essentially matching DeepSeek V3.2’s benchmark from a model that fits on local hardware. No per-token billing. No API rate limits. No vendor dependency.

Yeah, for a solo operator who’s been priced out of running Claude Opus for every task, this is a real option now. Not theoretical.

Deployable this week if you’ve got the hardware.

Side note: the 963GB MiMo download size is a real barrier for most people.

Yeah, qwen3-Coder-Next at 80B is way more accessible for smaller teams. Don’t sleep on the practical difference there.

The Multi-Model Stack Is Already Being Built by People Ahead of You

Hacker News thread on MiMo turned into a comparison of all four major Chinese open models. The comment that stuck with me: a developer said they’re running personal coding plans across MiMo, Kimi K2.6, DeepSeek V4. And GLM-5.1 simultaneously and getting “a lot of bang for the buck.”

That’s not one model.

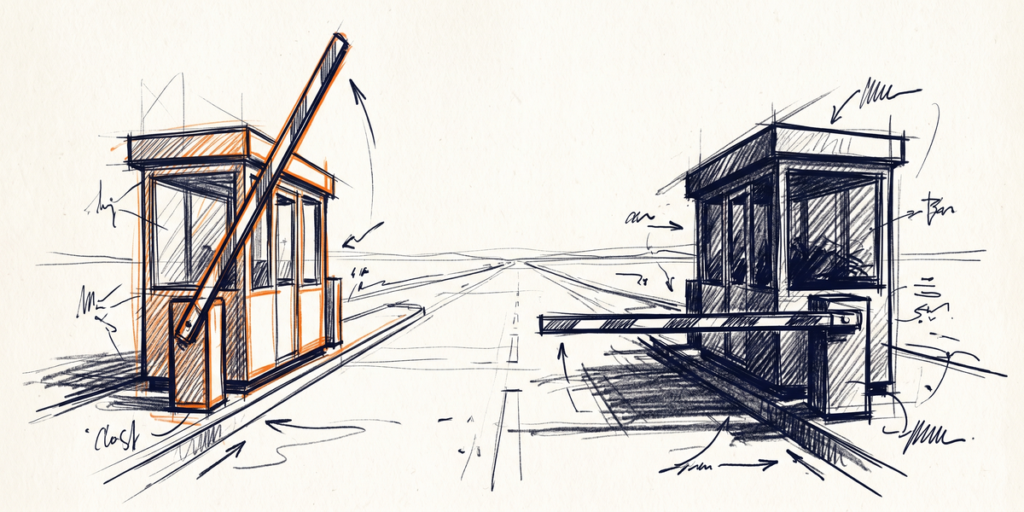

That’s a routing stack.

Most small shops haven’t built this because the tooling felt complex and the price pressure wasn’t urgent enough.

Both just changed. You don’t need to rewrite your product. You need to route smarter. Cheap, predictable tasks go to the $0.14/M models. Expensive tasks go to the expensive models only when the cheap ones genuinely can’t handle it.

If you’re burning Claude Opus for FAQ routing, you’ve got the wrong model and the wrong budget allocation.

What You Do With This

Honestly, run Qwen3-Coder-Next against your actual codebase this week. Not a benchmark. Your real code. If it handles 70% of your tasks adequately, route those 70% away from your paid API budget immediately. That’s real money saved that you can measure.

The shops figuring this out in the next 30 days will have a structural cost advantage.

The ones still paying $15/M tokens for tasks that Qwen3 handles fine will be the ones writing sad threads about their margins.

—

Sources

– The Decoder. Xiaomi’s MiMo-V2.5-Pro Takes Aim at Claude Opus

– Hacker News. MiMo and Kimi K2.6 Discussion

– DataLearner — Qwen3-Coder-Next 80B A3B Open Source