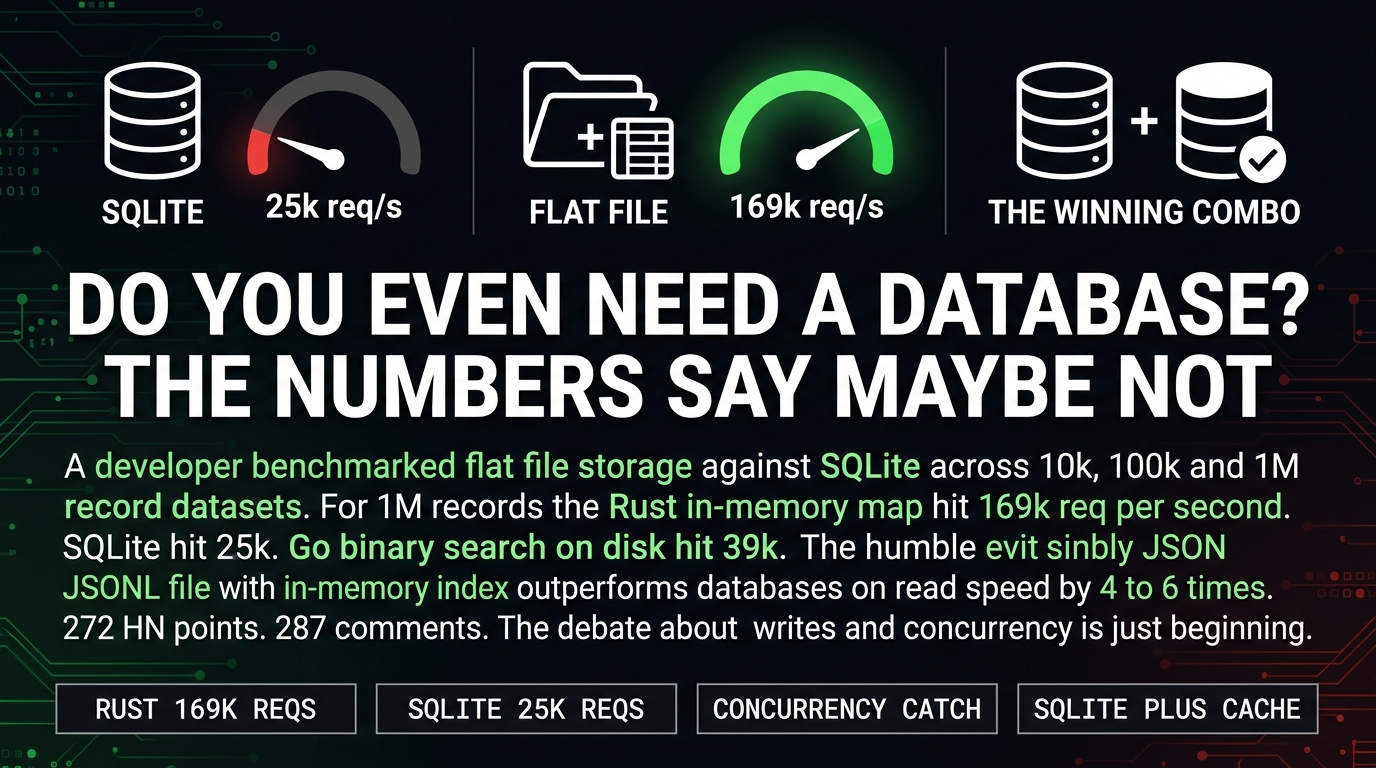

A developer ran benchmarks comparing three flat-file storage approaches against SQLite. For 1M records, a simple in-memory hash map backed by a JSONL file hit roughly 169,000 requests per second in Rust. SQLite hit 25,000. That is a 4 to 6x gap on reads.

The HN thread hit 272 points with 287 comments. Most of the comments are arguing about whether the benchmark is fair. That is the wrong argument.

The right question is what you are actually building and whether your architecture matches your requirements.

For most side projects, MVPs, and internal tools, those capabilities are not necessary. They are just there, adding complexity you did not evaluate.

The Benchmark Is Real. The Caveats Are Also Real.

The author tested three flat-file approaches against SQLite across 10k, 100k, and 1M record datasets. Linear scan, in-memory hash map, binary search on disk. The results held across all three scales.

The in-memory hash map approach wins on reads because it is doing exactly what you would expect. You load the file once into memory, build a hash index, and serve reads from RAM. SQLite has to query a file on disk, manage ACID guarantees, and handle write-ahead logging even when you are only reading.

It gets worse for SQLite on read-heavy workloads. Every read has to go through the query engine, the buffer pool, and the file system. The flat file approach skips all of that.

But here is the caveat that matters in practice. Concurrent writes from multiple processes create file locking problems that databases handle gracefully out of the box. The moment your cron job and your web server and your queue worker are all hitting the same file, you need something more than a mutex.

The thread consensus is reasonable. SQLite with an in-memory cache is the practical middle ground for most applications. You get ACID properties and concurrent write handling with read performance that rivals the custom flat-file approach.

The Real Problem Is Default Behavior

I build small agency work. Client projects, internal tools, automation workflows. The default stack for most of what I see is PostgreSQL because that is what Render and Railway and Fly.io default to. Nobody asked whether the application actually needs it.

The hidden cost of that default is not in the hosting bill. It is in the architecture decisions that compound from the first commit. Foreign key constraints. Normalized tables. ORM layers. Query optimization. Every one of those decisions makes sense at scale and adds friction at the beginning.

The flat file approach forces simplicity. You write JSON to a file. You load it into memory. You serve reads. The constraints that emerge from that simplicity are not limitations. They are the actual requirements of the problem you are solving.

If your application does not need concurrent writes from multiple processes and your data fits in RAM, SQLite with an in-memory cache is probably all you need. The fact that this sounds like a controversial statement tells you everything about how badly the defaults have drifted.

What To Actually Do

For your next side project, do not default to PostgreSQL. Default to SQLite with an in-memory cache and only add a database when you hit a specific constraint that SQLite cannot handle. The constraint might never come.

For client work, have the conversation early. Ask what the actual data requirements are. Ask how many concurrent writes the application will handle. Ask whether the client has existing infrastructure they are already paying for. Most of the time the answer is small enough that SQLite is the right choice.

The architecture that is easy to change is the one you did not overengineer from the start.

Sources:

– Do You Even Need a Database — DBPro

– Hacker News Discussion