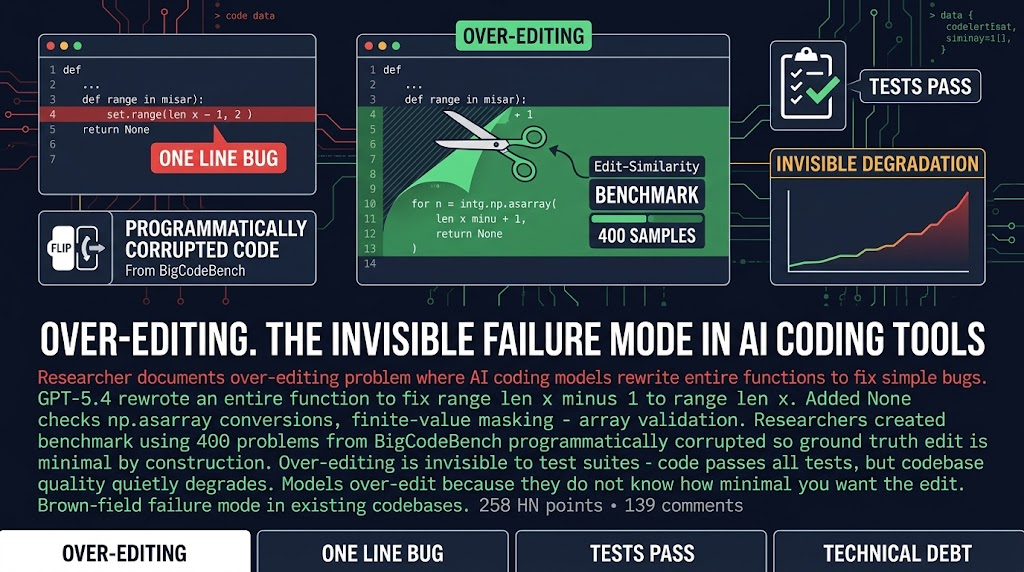

Your AI coding tool just rewrote an entire 200-line function to fix a single off-by-one error. Tests pass. Diff looks reasonable. Ship it.

Except something quietly broke. The function is now 340 lines. It has None checks, np.asarray conversions, finite-value masking, array validation that wasn’t there before. You asked for one line change. The model gave you 140 lines of defensive coding you didn’t ask for, didn’t need, and now have to maintain.

That’s the over-editing problem. A researcher documented it this week with a benchmark. 400 programmatically corrupted code samples, an Edit-Similarity metric, and the conclusion that frontier models consistently rewrite entire functions when a single line fix would do. This isn’t a correctness failure. The code works. It’s an efficiency failure that accumulates quietly.

Why Your Diff Looks Fine But Your Codebase Is Getting Worse

Here’s the thing nobody wants to say out loud.

Over-editing is invisible to test suites. The code passes every test. The diff looks reasonable in the pull request. Nobody flags it because there’s nothing to flag. The model fixed the bug. The tests pass. What are you going to say, “please make the same fix but with a smaller diff?”

But your codebase is getting more complex with every AI-assisted commit. Each over-edit adds edge cases, defensive logic, validation that wasn’t there and wasn’t asked for. That complexity compounds. Two years from now, someone will debug this function and wonder why it handles 47 different None scenarios for a calculation that should never produce None. That’s your AI’s fault. It over-engineered the fix for a one-line bug.

Here’s the thing. Models over-edit partly because they don’t know how minimal you want the edit to be. Current models improve for correctness and test passage, not for minimal edit distance. When they see existing code, they assume the existing code is fragile and needs defensive hardening. That assumption is usually wrong.

The Workaround Nobody Is Talking About

One HN commenter reported a pattern that actually works. Explain the mistake to the model. Have it fix the bug. Then ask it to record what it learned in project-specific skills. The next time a similar context comes up, it doesn’t make the mistake again.

That’s not a fix. It’s a workflow. And it requires you to actually review what the model is doing and catch the pattern. But it’s something.

The uncomfortable question is harder. If over-editing is invisible to tests, how do you know if your codebase quality is quietly degrading? Every AI-assisted commit that passes tests but rewrites more than necessary adds complexity that will compound. For agencies, this means your “AI velocity” might be accumulating technical debt you’re not measuring.

The code ships faster. The diff looks fine. The codebase quality is quietly getting worse.

What You Should Actually Do About It

Three things, in order.

First, start reviewing AI-assisted diffs for edit size, not just correctness. When you see a massive diff on a simple bug fix, that’s over-editing. Push back. “Fix the bug, don’t rewrite the function.” Explicit instructions like “make minimal edits only, fix the bug and nothing else” reduce but don’t eliminate the problem.

Second, start building project-specific skills for repeated patterns. If your AI keeps over-editing a specific type of function, document the correct pattern. Tell it what you expect. This is tedious but it compounds. Every skill you add reduces future over-editing in that context.

Third, start measuring your codebase complexity over time. Lines of code per function, cyclomatic complexity, None check density. Whatever you use, establish a baseline now so you can see if it drifts as AI-assisted commits accumulate. This is the only way to catch the invisible debt before it becomes unmanageable.

The real fix is probably in the frontier models. When they understand project context and can calibrate their edit aggressiveness to the actual scope of the problem, over-editing becomes a solved problem. Until then, you need to actively manage it. Your diff reviews need to catch edit size, not just correctness. And you need to be willing to push back when the model fixes a one-line bug by rewriting the entire function.

Sources: HN Discussion | Researcher Blog