Project Glasswing showed up in an Anthropic publication this week and the security community took it seriously. That part is straightforward. Glasswing is an AI system designed to find memory safety bugs and architectural flaws in critical software infrastructure using language model-guided analysis. When a major AI lab publishes a system designed to catch vulnerabilities that cause real-world breaches, people pay attention.

The thread on Hacker News (HN) hit 462 points with over 300 comments. The technical discussion is substantive. Security researchers are asking whether this finds things fuzzing misses, whether memory-safe languages make the whole category less relevant, and whether the architecture-level finds are the real prize.

Then there is the other conversation in the thread.

The Threat List

The Glasswing document names China, Iran, North Korea, and Russia as state-sponsored cyber threats. It describes their capabilities, their historical operations, and the kind of attacks Glasswing is designed to prevent.

The United States is not on that list.

Anthropic is currently suing the US government over its designation as a potential national security risk. The company has been blunt in public about its disagreement with how the Commerce Department classified it. That case is active.

The irony landed immediately in the comments. One person posted the obvious observation: a large American AI company does not list the US as an adversarial actor, in a document that otherwise catalogs nation-state threats in detail. Another pointed out that Anthropic’s legal position and this threat list are in direct tension. The company is arguing the government is wrong about Anthropic being a security risk, while simultaneously writing a paper that treats US cyber capabilities as neutral rather than threatening.

This is not a conspiracy. It is probably just internal teams not coordinating. But the result is a document that reads oddly if you know both stories.

What Glasswing Might Actually Change

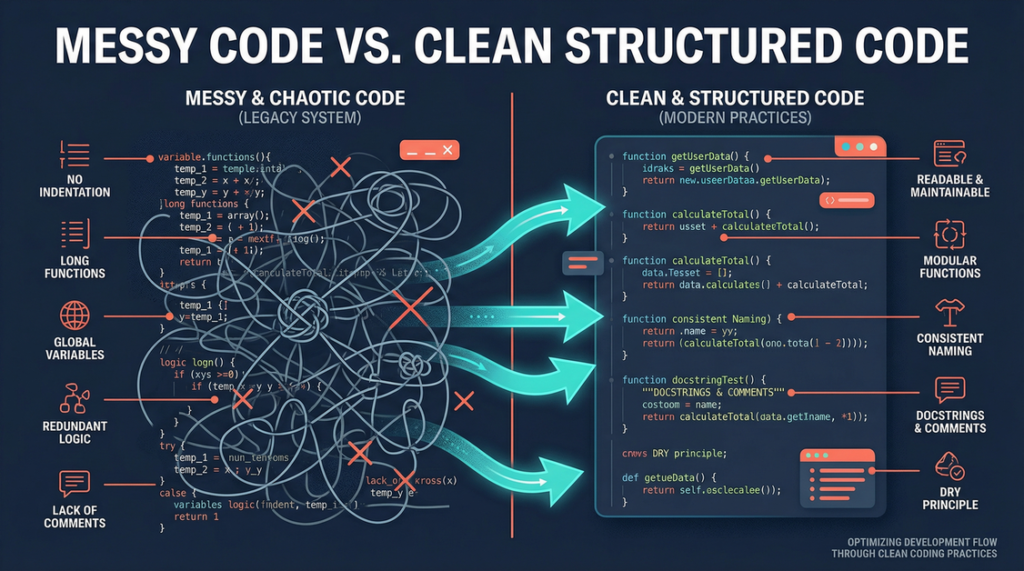

Setting aside the framing, the technical promise is real. Language model-guided analysis can find architectural flaws that conventional automated tools miss. Fuzzing finds bugs by throwing large volumes of random or mutated inputs at code. Static analysis finds bugs largely by matching code against known bad patterns. Neither easily catches the class of vulnerability that comes from a misunderstanding of how components interact across a system boundary.
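To make that last category concrete, here is a minimal sketch of a cross-boundary bug. It is not taken from the Glasswing document, and every name in it (`MAGIC`, `parse`, `store`, `SimulatedOverflow`) is invented for illustration. Two components are each reasonable on their own: the parser accepts any payload length that fits in one byte, and the store allocates 64 bytes and trusts its caller. The flaw lives in the gap between those two assumptions. The overflow is only simulated with an exception, since Python itself is memory safe.

```python
import random

MAGIC = b"GLSW"        # hypothetical 4-byte header, invented for this sketch
STORE_CAPACITY = 64    # what the storage component actually allocates


class SimulatedOverflow(Exception):
    """Stands in for a write past the end of a fixed-size buffer."""


def parse(message: bytes) -> bytes:
    """Component A: check the header and one-byte length, return the payload.

    A one-byte length field allows payloads of up to 255 bytes, and the
    parser considers all of them valid.
    """
    if len(message) < 5 or message[:4] != MAGIC:
        raise ValueError("bad header")
    length = message[4]
    payload = message[5:5 + length]
    if len(payload) != length:
        raise ValueError("truncated payload")
    return payload


def store(payload: bytes) -> bytearray:
    """Component B: copy into a fixed buffer, trusting the caller on size."""
    buffer = bytearray(STORE_CAPACITY)
    if len(payload) > STORE_CAPACITY:
        # In C this would be an out-of-bounds write; here we only flag it.
        raise SimulatedOverflow(f"{len(payload)} bytes into {STORE_CAPACITY}")
    buffer[:len(payload)] = payload
    return buffer


def handle(message: bytes) -> str:
    """Run one message through both components and classify the outcome."""
    try:
        store(parse(message))
        return "ok"
    except ValueError:
        return "rejected"
    except SimulatedOverflow:
        return "overflow"


def naive_fuzz(trials: int, seed: int = 0) -> int:
    """Feed purely random byte strings to handle() and count overflows."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        size = rng.randint(0, 300)
        message = bytes(rng.getrandbits(8) for _ in range(size))
        if handle(message) == "overflow":
            hits += 1
    return hits


# A message built with knowledge of both components reaches the bug directly.
crafted = MAGIC + bytes([200]) + b"A" * 200
print(handle(crafted))
print(naive_fuzz(500))
```

Purely random input almost never gets past the 4-byte header, so the naive fuzzer above has very little chance of reaching `store` at all. Real coverage-guided fuzzers are considerably better at this than the toy loop suggests, so the sketch illustrates the shape of the problem and is not a benchmark of any tool.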

If Glasswing genuinely finds that category at scale, alongside ordinary memory safety bugs, the downstream implications are significant. Memory safety bugs in critical infrastructure account for a large share of exploited vulnerabilities. If AI-driven analysis makes those bugs rare, the attack surface changes. State actors who have invested heavily in memory corruption exploits would need to shift strategy.

One commenter noted this could effectively wipe out the commercial spyware industry. NSO Group and similar vendors build their business on memory safety vulnerabilities in widely-deployed software. Eliminate those vulnerabilities and their product line breaks.

That is a genuinely large claim. Whether Glasswing delivers on it remains an open question.

The Apple Variable

The same thread raised an interesting complication. Apple shipped hardware memory tagging on iPhone 17 and built Lockdown Mode as a hardening layer for high-risk users. Both are real mitigations that reduce the value of the kind of bugs Glasswing targets.

Platforms that have already invested heavily in memory safety do not get the same benefit from Glasswing-style analysis. The bugs that Glasswing finds best are the ones that still exist in systems without those mitigations. The long-term impact of the tool depends partly on how widely memory-safe systems and hardware protections spread.

The Subtext Nobody Is Ignoring

Glasswing arrived a week after AI red-teaming found remote code execution (RCE) bugs in the Linux kernel and Anthropic blocked OpenClaw from subscription access, and while the company was in the middle of a source leak crisis. The security research community spent two weeks demonstrating that AI can find critical vulnerabilities in foundational software. Anthropic’s response is a product built partly from those same capabilities.

Whether that timing is coincidence or message discipline is impossible to know. The technical work appears legitimate. The geopolitical framing is what it is. The security community is engaged with both conversations simultaneously, which is probably the right way to read it.

Sources:

– Anthropic: Mythos Preview / Glasswing

– Hacker News Discussion

– Follow-Up Cybersecurity Assessment Thread (HN)